All talk

How can we get AI to reason correctly?

As AI researchers sometimes remind us, we must take care not to be beguiled by fluency. The Turing Test was never a true test of intelligence, after all, just of the ability to sound as if you’re intelligent.

Plenty of humans exhibit that trait too. A friend’s son, at the age of three, came to my home for the first time, looked around and then said: ‘Dave, I haven’t been to this house for some considerable time.’ He had never been before, in fact, but the way he said it sounded perfectly thought-out*. Even adult humans can mask a want of intelligence with a well-crafted phrase. Indeed, some facet of intelligence seems to be embedded in the linguistic patterns we learn.

But linguistic capability doesn’t necessarily correspond to an ability to reason. It can just allow sophistry. An LLM presented with the Tower of Hanoi problem will confidently give an answer that is highly likely to be wrong when the number of disks gets to more than three or four. Ask it to write code to solve the problem, however, and that code will give correct answers for any number of disks.
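To make that concrete, here is a minimal sketch of the sort of program meant: the standard recursive solution, written in Python. The function name `hanoi` and the peg labels are my own choices for illustration, not anything an LLM in particular produced.

```python
def hanoi(n, source="A", target="C", spare="B"):
    """Return the list of (from_peg, to_peg) moves that shifts n disks."""
    if n == 0:
        return []
    # Move the n-1 smaller disks out of the way, move the largest disk,
    # then move the n-1 smaller disks back on top of it.
    return (
        hanoi(n - 1, source, spare, target)
        + [(source, target)]
        + hanoi(n - 1, spare, target, source)
    )


moves = hanoi(4)
print(len(moves))  # 15, i.e. 2**4 - 1
```

The same three lines of recursion work for any number of disks, and the move count is always 2^n - 1. The program never has to 'hold the whole puzzle in its head', which is exactly what the off-the-cuff answer is being asked to do.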

This is not surprising. It’s exactly what you’d find in a human if you demanded they give you an answer to the problem off the top of their head without recourse to mental arithmetic or the back of an envelope. There’s nothing new about any of this. Descartes wrote about it four hundred years ago in Discours de la Méthode:

‘I esteemed eloquence highly, and was in raptures with poesy; but I thought that both were gifts of nature rather than fruits of study. Those in whom the faculty of reason is predominant, and who most skillfully dispose their thoughts with a view to render them clear and intelligible, are always the best able to persuade others of the truth of what they lay down, though they should speak only in the language of Lower Brittany, and be wholly ignorant of the rules of rhetoric.’

Some reasoning seems to be embedded in language, but only the reasoning already contained in familiar patterns. New thought is not innate in those patterns. On the other hand, when using maths and writing code, LLMs and humans are able to reason through a new problem that would be beyond their unaided intelligence. We can solve equations correctly and thereby reach new discoveries without ever really understanding the underlying reality.

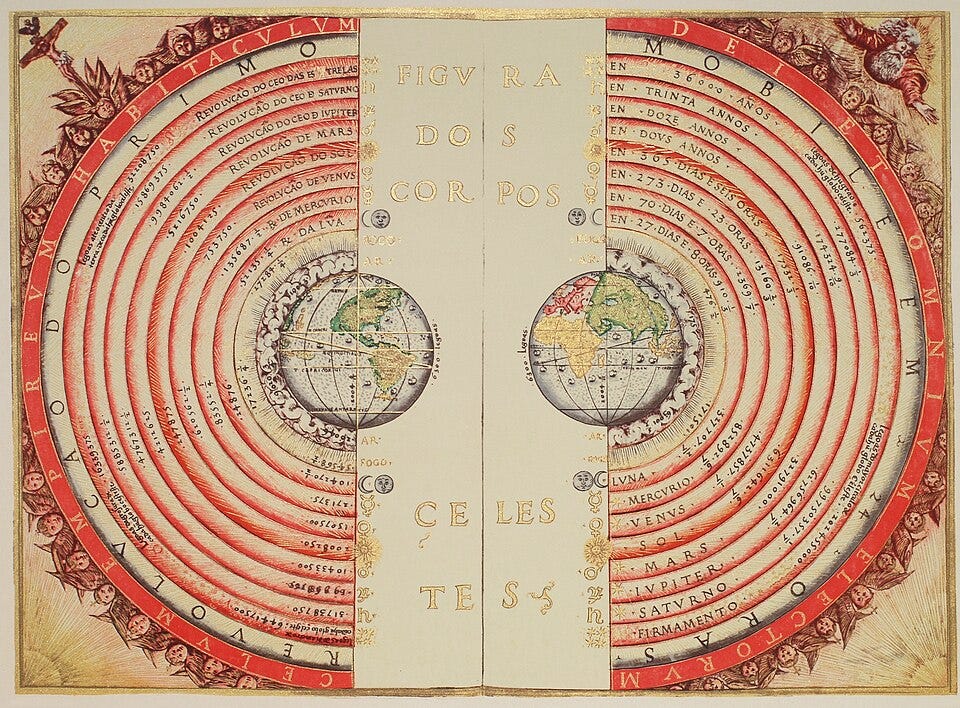

I was going to mention quantum mechanics, but let’s go in the other direction. Take the motion of the planets. When you have Newton’s Laws, the problem is relatively simple (for widely separated planets, anyway) but, without any theory or mathematics, people used to come up with completely harebrained and overcomplicated explanations. Maths differs from language in that there are formal rules, and those rules drive the statement to an inescapable and meaningful conclusion.

Shown the motion of the Moon around the Earth and asked where the forces are, an AI will typically guess at an answer as muddled as any medieval thinker’s. (Don’t get smug; there are humans who think the Earth is flat.) So how can we get AIs to reason their way through complex real-world problems? A clue might come from the way rogue waves in mid-ocean are studied. First an AI is trained to predict when the rogue waves will occur purely by pattern matching. Then another algorithm attempts to find an equation that matches the predictions of the first AI using the most likely variables.

If we tried that on the orbital mechanics problem, using the variables of mass and distance, the second AI would soon derive the law of gravitation as long as it knew the laws of motion – and it could have derived those too if presented first with simpler dynamics cases.
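A toy version of that second step might look like the sketch below. It is not the method used in the rogue-wave work, just a brute-force search over candidate exponents, and it cheats openly: the 'observations' are synthetic, generated from the known law with 1% scatter, standing in for the predictions of the pattern-matching AI. All the names here (`make_observations`, `score`, `search`) are hypothetical.

```python
import math
import random

G = 6.674e-11  # used only to generate the synthetic "observations"


def make_observations(count=200, seed=0):
    """Stand-in for the pattern matcher's output: (m1, m2, r, force) rows."""
    rng = random.Random(seed)
    rows = []
    for _ in range(count):
        m1 = rng.uniform(1e22, 1e25)
        m2 = rng.uniform(1e22, 1e25)
        r = rng.uniform(1e8, 1e9)
        noise = rng.uniform(0.99, 1.01)  # 1% scatter
        rows.append((m1, m2, r, noise * G * m1 * m2 / r**2))
    return rows


def score(rows, p, q):
    """Fit force = k * (m1*m2)**p * r**q in log space; return (spread, k)."""
    residuals = [
        math.log(f) - p * math.log(m1 * m2) - q * math.log(r)
        for m1, m2, r, f in rows
    ]
    log_k = sum(residuals) / len(residuals)
    # If the candidate form is right, every row implies the same constant k,
    # so the spread of the residuals is close to zero.
    spread = sum((x - log_k) ** 2 for x in residuals) / len(residuals)
    return spread, math.exp(log_k)


def search(rows, mass_powers=(0, 1, 2), distance_powers=range(-3, 4)):
    """Try every candidate exponent pair and keep the one with least spread."""
    best = None
    for p in mass_powers:
        for q in distance_powers:
            spread, k = score(rows, p, q)
            if best is None or spread < best[0]:
                best = (spread, p, q, k)
    return best[1], best[2], best[3]


p, q, k = search(make_observations())
print(f"force ~ {k:.3e} * (m1*m2)**{p} * r**{q}")
```

On this data the search settles on the product of the masses to the first power and the distance to the power of minus two, with a constant close to G. The point is only that a dumb, rule-bound search over a handful of 'most likely variables' can recover the form of the law from examples; real equation-discovery tools explore far larger spaces of expressions.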

In a human, what happens then is we’ll look at our equation and ask ourselves, ‘What’s really going on here..?’ And we can do the same thing with generative AI, using the derived equation to recalibrate the ‘grammar’ of the physics model – ‘Ah, so it involves the product of the masses and the square of the separation between them.’ (Not that simplistic in fact, but you get the idea.) Then, in the same way that an LLM learns to evaluate words in context, it can now be trained to evaluate all the various parameters of the problem domain we’re interested in. Those can be a lot gnarlier than orbital mechanics. Think of weather, investment strategy, genetics, plasma confinement. And, yes, quantum interactions.

Such problems may be capable of being handled by the kind of ‘intuitive’ thinking that characterizes generative AI. All that is necessary, as Descartes said, is a method for rightly conducting the reason.

* Interestingly, that was how he talked, having been brought up with two well-educated parents and three older siblings in a bookish family. Once he started going to school with children his own age, his conversation was no longer so quaintly erudite. Then he sounded dumber, just like any other six-year-old kid.

Maybe. More likely, though, LLMs just aren't the answer at all.

Just in the last week both Demis Hassabis and Yann LeCun have seemingly moved towards the position popularised by Gary Marcus that what AI needs is world models. Maybe if some of the huge buckets of venture capital can be moved across we might see some progress there?